This post is the latest edition of The Web Untangled, our monthly newsletter. Sign up to receive it direct to your inbox.

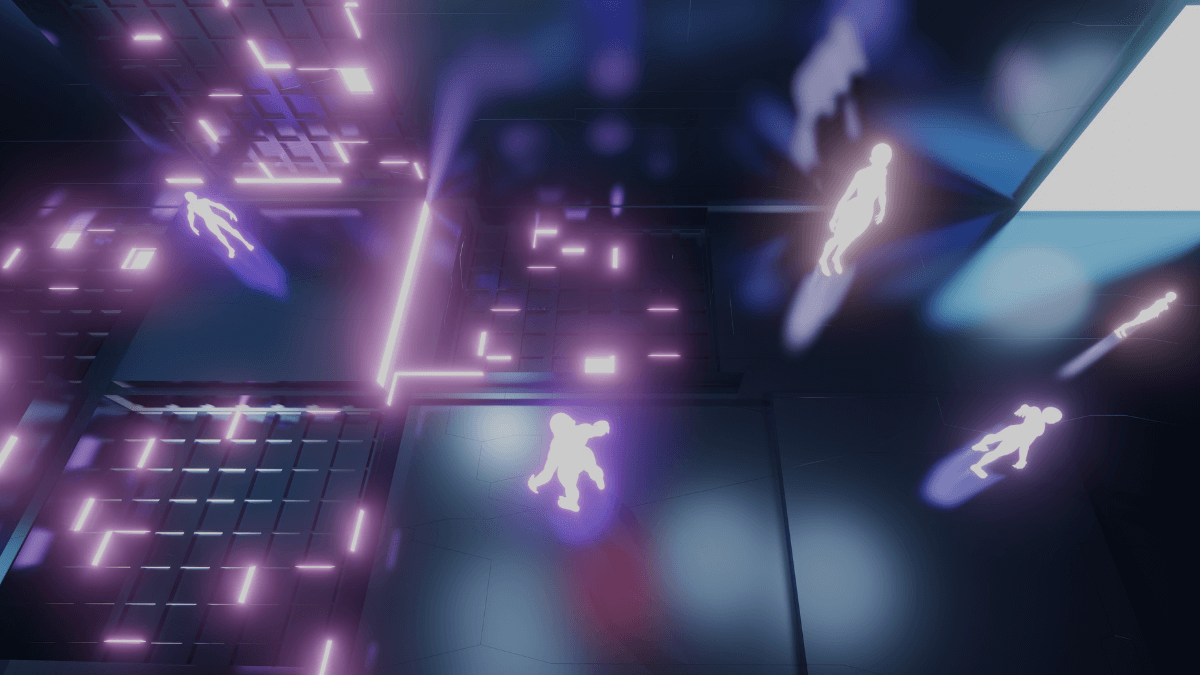

The metaverse is trending. Companies from Meta to Microsoft, Nike to Tinder, are making plays for a future where interaction online takes place primarily in virtual environments.

This immersive virtual world could expose us to new worlds, new ideas, and new experiences, taking what Roblox, Second Life, and Minecraft offer to new heights.

But that promise doesn’t come without risk: to our privacy, to our security, to our safety.

Virtual reality has the power to blur the line between what’s real and what’s not. In a persistent, all-encompassing digital world, the sensory experience is heightened, which in turn escalates the experience of harassment, assault, bullying, and hate speech. In fact, research indicates that abuse in virtual reality is “far more traumatic than in other digital worlds”.

We need action now to ensure spaces in the metaverse are designed to keep people safe and curb the abuse and violence that is all too familiar on today’s social platforms. Given that companies fail to stop hate and abuse on their platforms today, doing so in complex, real-time virtual environments will be one of their biggest challenges in the years to come. They must make it a top priority.

The metaverse could create exciting new ways for people to build connections and find community, like the web before it. We can’t allow it to become one more space where horrific abuse and hate are allowed to thrive.

Here we untangle the threat of abuse in virtual reality and consider what can be done to keep everyone safe in our digital future.

The challenge with harassment in VR is its presence. It feels real, like a person is stepping next to you and saying and doing things that violate your personal space.

Brittan Heller, founding director of the Anti-Defamation League’s Center on Technology and Society | CNET

Online abuse in the metaverse, by the numbers

7 minutes: Researchers from the Center for Countering Digital Hate found one incident of harassment and abuse on Facebook’s VR Chat every 7 minutes over a 12-hour period (Center for Countering Digital Hate)

4 feet: Meta this month introduced a default personal boundary that prevents avatars from coming within 4 feet of each other in Horizon Worlds and Horizon Venues spaces (Ars Technica)

$50 million: Meta said it has invested $50 million in global research to anticipate safety risks and develop its metaverse products responsibly (The New York Times)

49 percent: 49 percent of women surveyed reported experiencing at least one incident of sexual harassment while using VR products including Oculus Rift, PlayStation VR and Microsoft Windows Mixed Reality (Extended Mind)

80 percent: 80 percent of survey respondents, all of whom regularly use virtual reality, believe individually blocking harassers is the most effective tool to deal with them (Extended Mind)

Fifteen years ago, I was a clean energy investor, when mining coal, oil and gas was mainstream. And look at where we are today. Just like LEED energy efficient buildings became the de facto standard for how we build buildings, I want safety, privacy and inclusion to be three core pillars to how we operate in a digital society.

Tiffany Xingyu Wang, Founder of the OASIS Consortium | TIME Magazine

Critical Questions

What’s the current state of safety?

Virtual reality games and environments are no strangers to harassment, assaults, bullying, and hate speech, and it’s often difficult to report these incidents.

So it was no surprise when beta testers of Meta’s virtual-reality social media platform Horizon Worlds began reporting instances of groping.

Meta says it will continue to improve its user experience to make safety tools easy to find and use. But many question whether the onus should be on users to keep themselves safe.

🎧 LISTEN: Trust and Safety in Virtual Worlds, Tech Policy Press

What can be done to keep the metaverse safe for all?

The World Economic Forum (WEF) has gathered perspectives from companies, academics, civil society experts, and regulators about how to make virtual worlds safe environments.

Elsewhere, Access Now and the Electronic Frontier Foundation (EFF) urge companies and investors to identify and address the potential human rights risks of extended reality (virtual reality and augmented reality) technologies.

What should be considered a crime in the metaverse?

Philosopher and cognitive scientist David J. Chalmers considers how our moral and legal systems will need to catch up to life in the virtual world.

What about keeping kids safe?

Journalist Catherine Buni speaks to researchers exploring the impact of VR on children to determine whether their still-developing brains are more susceptible to its harms.

There’s so much more to be done here. No one should ever have to flee from a VR experience to escape feeling powerless.

Aaron Stanton, Executive Producer, Quivr; Director, VR Health Institute | MIT Technology Review

The metaverse: a bold, exciting digital future? Or a digital dystopia like we’ve never seen before?

As we continue to grapple with the challenges of today’s web, those building the metaverse must learn from the pitfalls of the past and anticipate the challenges of the future.

If the metaverse really is to be the next generation of the web, we need serious commitment and action now to ensure it is built to prioritise the rights, safety, and wellbeing of everyone—and preventing abuse is just one key area to consider.

For more updates, follow us on Twitter at @webfoundation and sign up to receive our newsletter and The Web This Week, a weekly news brief on the most important stories in tech.

Tim Berners-Lee, our co-founder, gave the web to the world for free, but fighting for it comes at a cost. Please support our work to build a safe, empowering web for everyone.