This post was written by Juan Ortiz Freuler, Web Foundation Policy Fellow, who worked on this research. You can find him on Twitter @juanof9.

As more people get online, we are seeing the construction and consolidation of the digital public square. As people spend more time online, this digital public square is increasingly where people define and redefine their identities, where civic discussions take place, and where political organisation leads to tangible shifts in power. As with physical public squares, the architecture and rules that govern the space will determine the power dynamics that shape our society.

With the power to decide what we see and what we don’t, private companies and their algorithms have a tremendous influence over public discourse and the shape of the digital public square. Focusing on Facebook, our new research seeks to better understand the algorithms that manage our daily news diets and what we can do to make sure they work in our best interests.

The invisible curation of content

A certain amount of curation is inevitable — perhaps even desirable in some contexts. One of the great triumphs of the web is that it has given us all tools to speak to the masses, ending an era where print was reserved for the privileged and the powerful. This, naturally, led to astronomical growth in the volume of content. And so, to effectively navigate today’s web, we rely on the help of algorithms to organise content for us.

Yet, this dependence comes with risks. While the web gave anyone the chance to speak, increasingly algorithms define who and what is heard. What are the engineers behind these algorithms optimising for? Are people aware that a profound shift in the way they consume information is taking place? How can we ensure users have the opportunity to actively shape the algorithms curating what they read?

With more people relying on online sources for information than ever — half of the world’s population will be online this year — it’s vital we better understand the role that technology companies play in mediating information, and ensure that users are put back in control.

What we did

The research focuses on Facebook’s News Feed — the algorithm selecting content for nearly two billion users on the world’s most popular social network.

To better understand how the algorithm works, we ran a controlled experiment based in Argentina: we set up six identical profiles that followed the same news sources on Facebook, and observed which stories each profile received.

What we found

The findings show how algorithms have been delegated the function of curating content in a way that defines online users’ information diets and what can be called the online ‘public square’. We found:

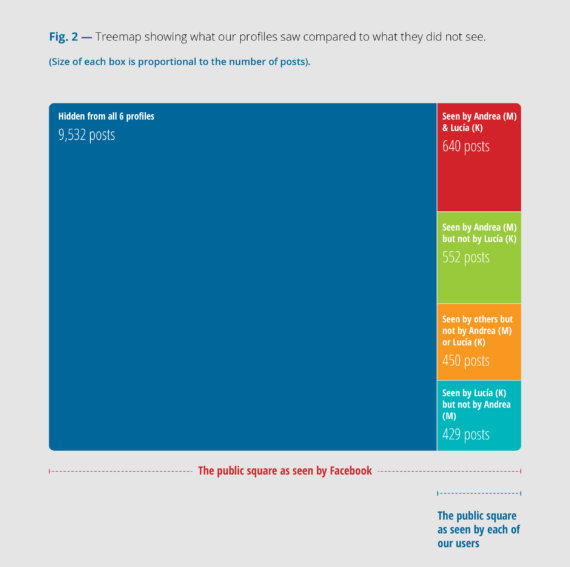

1. Large gaps between the number of stories published and seen by our profiles: Our profiles were shown an average of one out of six posts from across all the pages that they were following. The gap between what is seen and what is hidden serves as a proxy for the degree of curation taking place on the platform.

2. No exposure to certain types of stories: The decision to rely on algorithms to curate content means critical social and political posts that do not hit certain black-box metrics may not be shown to some users. For example, during the period observed, none of the stories published about femicide were surfaced on the feeds of our profiles, while stories about homicides were shown. What metrics should the public square be optimising for? Who should define these metrics, and how?

3. Different posts for similar folks: Users with the same profile details, following the same news sites, were not exposed to the same set of stories. It is as if two people buy the same newspaper but find different sets of stories have been cut out.
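The first and third findings come down to simple set arithmetic. As a rough sketch only, and not the method used in the report, the share of published posts that reached a profile and the overlap between two profiles' feeds could be computed as below. The post IDs, profile names, and function names are all hypothetical and made up for illustration; they are not data from the experiment.

```python
from __future__ import annotations

from itertools import combinations


def exposure_rate(published: set[str], seen: set[str]) -> float:
    """Share of published posts that a profile was actually shown."""
    if not published:
        return 0.0
    return len(seen & published) / len(published)


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two feeds: 1.0 means identical, 0.0 means disjoint."""
    union = a | b
    if not union:
        return 1.0  # two empty feeds are treated as identical
    return len(a & b) / len(union)


# Hypothetical data: six posts published, two profiles shown different subsets.
published = {"p1", "p2", "p3", "p4", "p5", "p6"}
feeds = {
    "profile_a": {"p1", "p2"},
    "profile_b": {"p2", "p3"},
}

# Exposure rate per profile, and feed overlap for every pair of profiles.
rates = {name: exposure_rate(published, seen) for name, seen in feeds.items()}
overlaps = {
    (x, y): jaccard(feeds[x], feeds[y])
    for x, y in combinations(sorted(feeds), 2)
}
```

A low exposure rate points to heavy curation, and an overlap below 1.0 between profiles with identical details and subscriptions is the "different posts for similar folks" pattern described above.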

Ways forward

While the focus of this research is on Facebook, similar concerns apply to other social media platforms, such as YouTube and Twitter, which shape the information diets of their users in the same way, often without the knowledge or control of those users.

As we grow ever more dependent on digital platforms for information, the control they have over the public discourse is becoming a liability to our social and political systems. Prior research shows that what people see or do not see on Facebook can impact voter turnout and people’s mood. We must bear in mind the long-term implications of this trend, and work towards ensuring power remains distributed. A way to achieve this is to give the people who use these platforms more control over their information diets, including:

- The use of real-time transparency dashboards for users to compare what posts they saw versus what was published within a given period of time, in order to increase public understanding of how algorithmic curation works.

- Allowing users an array of options to manage their own News Feed settings and play a more active role in constructing their own information diet.

- Facilitating independent research on the externalities and systemic effects of different approaches to algorithmic curation of content.
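To make the first recommendation more concrete, here is a minimal sketch of the comparison a transparency dashboard might run: for a chosen time window, count per source how many posts were published and how many the user was shown. The `Post` record, the `dashboard_rows` function, the outlet names, and the dates are hypothetical and chosen for illustration; no platform is known to expose data in this form.

```python
from collections import Counter
from datetime import datetime
from typing import NamedTuple


class Post(NamedTuple):
    post_id: str
    source: str
    published_at: datetime


def dashboard_rows(posts, seen_ids, start, end):
    """Per source, count posts published in [start, end) and how many were shown."""
    published = Counter()
    shown = Counter()
    for post in posts:
        if start <= post.published_at < end:
            published[post.source] += 1
            if post.post_id in seen_ids:
                shown[post.source] += 1
    return {
        source: {
            "published": total,
            "shown": shown[source],
            "hidden": total - shown[source],
        }
        for source, total in published.items()
    }


# Hypothetical data: four posts from two outlets; the user saw two of them.
posts = [
    Post("a1", "Outlet A", datetime(2018, 1, 1, 9)),
    Post("a2", "Outlet A", datetime(2018, 1, 1, 12)),
    Post("b1", "Outlet B", datetime(2018, 1, 1, 10)),
    Post("b2", "Outlet B", datetime(2018, 1, 3, 10)),  # outside the window below
]
seen_ids = {"a1", "b2"}

rows = dashboard_rows(posts, seen_ids, datetime(2018, 1, 1), datetime(2018, 1, 2))
```

Each row gives a user the "published versus seen" comparison for one source, which could then be rendered as a table or chart.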

We envision this report as a first step in allowing the general public to better understand how platform algorithms work. We hope it will trigger a broader conversation about the roles that online users, governments and platforms have in defining the values embedded in algorithms.

If the web is to remain a tool that empowers people and their communities, we must ensure that control over what is seen and heard online does not rest solely with a few large companies and their algorithms.

To explore this research and read our recommendations, download the full report (available in Spanish and English).

Use our interactive graph to explore the posts our users were exposed to: Spanish / English.